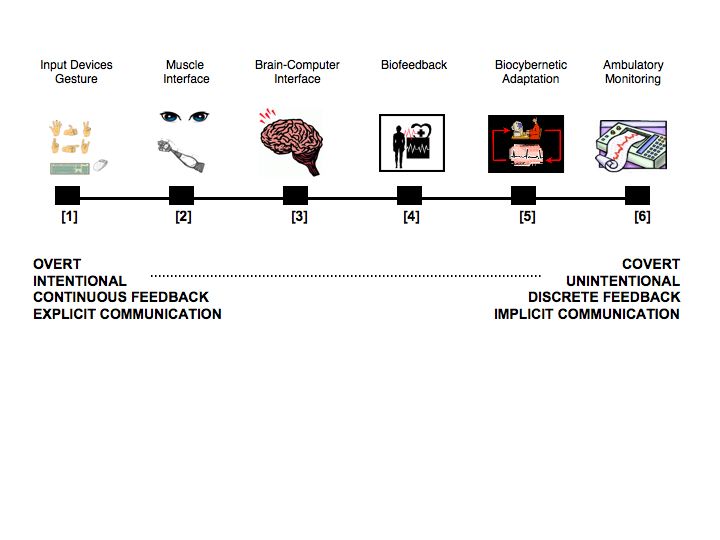

I just watched this cool presentation about blogging self-report data on mood/lifestyle and looking at the relationship with health. My interest in this topic is tied up in the concept of body-blogging (i.e. recording physiological data using ambulatory systems) – see earlier post. What’s nice about the idea of body-blogging is that it’s implicit and doesn’t require you to do anything extra, such as completing mood ratings or other self-reports. This approach has two fairly major downsides: (1) the technology to do it easily is still fairly expensive and the associated software is cumbersome to use (not that it’s bad software, it’s just designed for medical or research purposes), and (2) continuous physiological recording generates a huge amount of data.

For the individual, this concept of self-tracking and self-quantifying is linked to increased self-awareness (learning how your body is influenced by everyday events), and with self-awareness come new strategies for self-regulation to minimise negative or harmful changes. My feeling is that there are certain times in our lives (e.g. following a serious illness or medical procedure) when we have a strong motivation to quantify and monitor our physiological patterns. However, I see a risk of that strategy tipping a person over into hypochondria if they feel particularly vulnerable.

At the level of the group, it’s fascinating to see the seeds of a crowdsourcing idea in the above presentation. The idea is that people self-log over a period of time and share this information anonymously on the web. This activity creates a database that everyone can access and analyse, participants and researchers alike. I wonder if people would be as comfortable sharing heart rate or blood pressure data – provided it was submitted anonymously, I don’t see why not.

There’s enormous potential here for wearable physiological sensors to be combined with self-reported logging, and for both data sets to be brought together online. Obviously there is a mismatch in temporal resolution here: physiological data can be sampled at intervals of milliseconds whilst self-report data is logged at intervals of hours. But some clever software could be constructed to aggregate the physiology and put both data sets on the same time frame. The benefit of doing this, for both researcher and participant, is to explore the connections between (previously) unseen patterns of physiological responses and the experience of the individual/group/population.
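To make the aggregation idea a bit more concrete, here is a minimal sketch in Python of one way it might work: average the fast physiological samples within each clock hour, then pair each self-report with the average for its hour. Everything in it is my own assumption for illustration, including the function names (`hour_bucket`, `aggregate_hourly`, `align`), the choice of an hourly mean, and the made-up heart rate and mood values. Real software would have to deal with gaps, artefacts and a more careful choice of time window.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean


def hour_bucket(ts):
    """Truncate a timestamp to the start of its clock hour."""
    return ts.replace(minute=0, second=0, microsecond=0)


def aggregate_hourly(samples):
    """Average (timestamp, value) samples within each clock hour."""
    buckets = defaultdict(list)
    for ts, value in samples:
        buckets[hour_bucket(ts)].append(value)
    return {hour: mean(values) for hour, values in buckets.items()}


def align(physio_samples, self_reports):
    """Pair each self-report with the mean physiology for the same hour.

    A report with no physiology recorded in its hour is paired with None.
    """
    hourly = aggregate_hourly(physio_samples)
    return [
        (hour_bucket(ts), rating, hourly.get(hour_bucket(ts)))
        for ts, rating in self_reports
    ]


# Made-up data (assumption): heart rate in bpm every 500 ms, mood rated 1-10.
start = datetime(2024, 1, 1, 9, 0, 0)
heart_rate = [
    (start + timedelta(milliseconds=500 * i), 60 + (i % 3)) for i in range(6)
]
heart_rate += [
    (start + timedelta(hours=1, milliseconds=500 * i), 80) for i in range(4)
]
mood = [
    (start + timedelta(minutes=30), 7),
    (start + timedelta(hours=1, minutes=45), 4),
]

# Each entry is (hour, mood rating, mean heart rate for that hour).
aligned = align(heart_rate, mood)
for hour, rating, mean_hr in aligned:
    print(hour, rating, mean_hr)
```

Averaging over the hour is the crudest option. It throws away exactly the moment-to-moment variation that makes physiology interesting, so a real tool might keep several summary values per window (e.g. minimum, maximum, variability) rather than a single mean.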

For anyone who’s interested, here’s a link to another blog site containing a report from an event that focused on self-tracking technologies.