The biocybernetic loop is the underlying mechanism behind physiological interactive systems. It describes how physiological information is collected from a user, analysed, and then translated into a response at the system interface. The most common manifestation of the biocybernetic loop is traditional biofeedback therapy, in which the physiological signal is mirrored back to the user as a number or graphic that changes in real time with the signal.

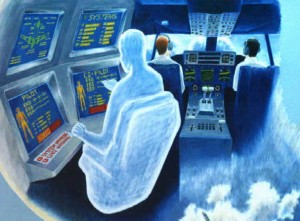

In the 1990s a team at NASA published a paper that introduced a new take on the traditional biocybernetic loop: biocybernetic adaptation, in which physiological information is used to adapt the system the user is interacting with rather than merely being reflected back to the user. In this instance the team implemented a pilot simulator that used EEG measures to control the auto-pilot status, with the intent of regulating pilot attentiveness.
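To make the collect, analyse, respond cycle concrete, here is a minimal Python sketch of an adaptive loop. Everything in it is an illustrative assumption rather than the NASA team's implementation: the samples stand in for some attentiveness score between 0 and 1, `analyse` simply averages a window of samples, and the threshold rule in `adapt` (low attentiveness switches the auto-pilot off so the user has to re-engage) is a hypothetical policy chosen for the example.

```python
from statistics import mean
from typing import Iterable, List


def analyse(window: List[float]) -> float:
    """Reduce a window of samples to a single score (assumption: a plain mean)."""
    return mean(window)


def adapt(score: float, threshold: float = 0.5) -> bool:
    """Hypothetical adaptation rule: keep the auto-pilot on only while the
    attentiveness score is at or above the threshold."""
    return score >= threshold


def run_loop(samples: Iterable[float], window_size: int = 4) -> List[bool]:
    """Run the loop over a stream of samples and return the auto-pilot
    status chosen after each full window."""
    window: List[float] = []
    history: List[bool] = []
    for sample in samples:              # 1. collect
        window.append(sample)
        if len(window) < window_size:
            continue
        score = analyse(window)         # 2. analyse
        history.append(adapt(score))    # 3. translate into a system response
        window.clear()
    return history


if __name__ == "__main__":
    # Made-up scores: an attentive window followed by an inattentive one.
    print(run_loop([0.9, 0.8, 0.7, 0.6, 0.1, 0.2, 0.3, 0.2]))
```

A biofeedback display would stop at step 2 and simply show the score to the user; the adaptive variant differs only in step 3, where the score changes what the system itself does.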

Dr. Alan Pope was the lead author on this paper, and has worked extensively in the field of biocybernetic systems for several decades; outside the academic community he’s probably best known for his work on biofeedback gaming therapies. To our good fortune we met Alan at a workshop we ran last year at CHI (a video of his talk can be found here) and he kindly allowed us the opportunity to delve further into his work with an interview.

So follow us across the threshold, if you will, and prepare to learn more about the origins of the biocybernetic loop and its use at NASA, along with its future in research and industry.
