Visiting arcades on holiday is one of my traditions. Come every holiday, I hole up in the nearest arcade and play games until my fingers go numb, usually from the recoil of the light-gun games. Sadly, in my experience, arcade culture in the UK has diminished significantly, as the novelty and variety of yesteryear are simply not there any more. Most arcades tend to host a mixture of dated racing and light-gun games (I’m looking at you, Time Crisis), which, while fun at the time, have lost their charm. During my recent holiday, much to my surprise, I came across a brand new arcade game which really piqued my interest: Dark Escape 4D by Namco.

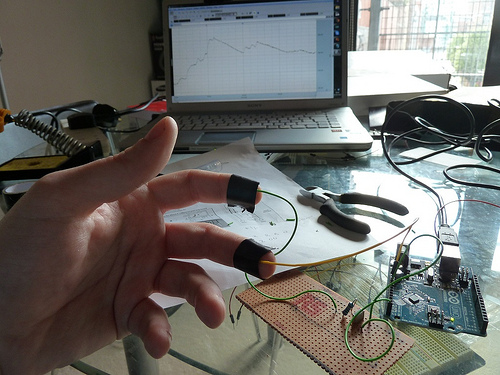

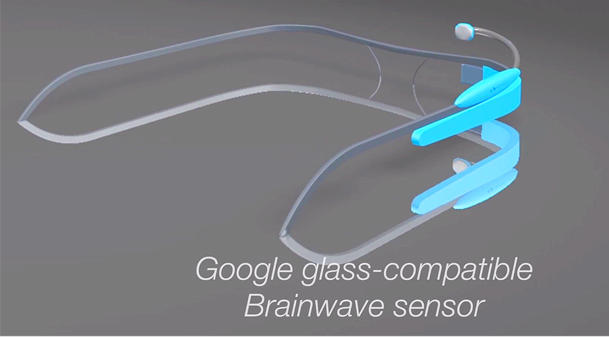

And why did this game catch my attention so? Well, because it was a biofeedback game, a biofeedback game at the ARCADE!